Report Published November 1, 2017 · 14 minute read

How Higher Education Data Reporting is Both Burdensome AND Inadequate

Wesley Whistle

Each year, prospective students looking to head to college face an important decision about where to make one of the most costly and time-consuming investments of their lives. To guide them through this process, many rely on external resources to help them figure out which school they want to attend. Some may be concerned with tuition and financial aid packages, while others may want more information about the types of careers typical of graduates from a given school. They might be asking about possible majors, how much they might expect to make after attending, or even how likely a person like them is to graduate from that institution. So what can these curious potential students do?

There is a bevy of resources currently available to students and families looking to navigate the ever-growing and complex higher education landscape in the United States. Through rankings websites like U.S. News & World Report and Princeton Review, institutional websites, and federal resources like the Department of Education’s College Scorecard, students and their families can find some pieces of information on enrollment, cost and typical debt load, retention, and graduation rates. They can also see how much some students earn after they attend. There’s no doubt that most institutions are working to arm students with more information today than ever before.

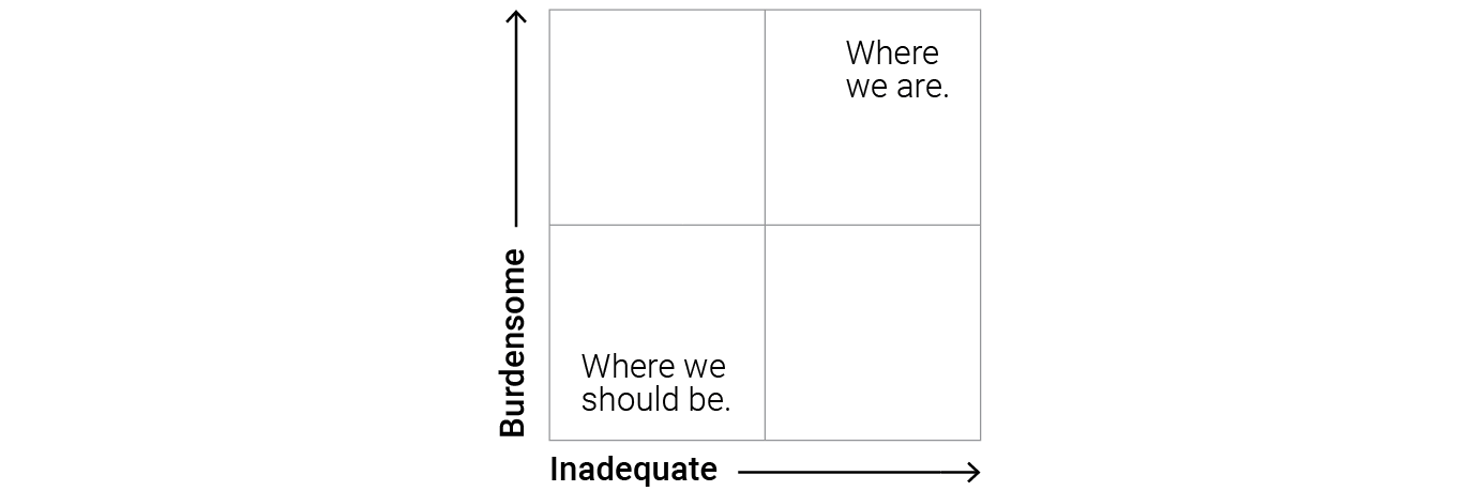

But even while there have been great strides to increase transparency, our higher education data system still falls short. Due to a restriction imposed by Congress in 2008 that prohibits the creation of a federal student-level data system, the data collection process is inadequate at both the front and back ends: institutions are burdened by a complex data submission process, and students and families receive incomplete information as consumers.

It would be one thing if data collection were burdensome for institutions and the federal government but delivered highly useful and valuable information to all stakeholders in return. It would be another if the process required little effort and produced little actionable data. But right now, we are living in the worst of both worlds: the data collection process we have is overly complicated and still delivers subpar data. Overturning the ban on collecting student-level data would help reverse this trend by simplifying and streamlining the process for institutions, while also providing better, more complete information for consumers in the higher education marketplace. It’s a win-win for those producing and using higher education data.

Brief History of Federal Data Reporting

Asking institutions to report student outcomes data is critical to providing transparency to consumers who navigate the postsecondary system, as well as to tracking the $130 billion investment taxpayers make in higher education. The collection of data by the federal government dates back to 1966, first through the Higher Education General Information Survey and, starting in 1986, through the Integrated Postsecondary Education Data System (IPEDS).1 Over the years, legislation such as the Student Right to Know Act of 1990 and the Higher Education Opportunity Act of 2008 has required institutions to shift reporting from inputs like admissions information to outcomes like graduation and employment in order to better measure student success.2 Many external rankings and tools from non-governmental organizations use this same data to share and measure the performance of different institutions. This public data ensures that students are provided with accurate information on institutional outcomes and allows institutions to benchmark and improve student success.

How Higher Education Reporting is Burdensome

Despite the importance of collecting a standard set of key input and output measures from institutions, the implementation of the data reporting process is needlessly difficult, and it often requires institutions to spend more time, money, and personnel on the process than is necessary.

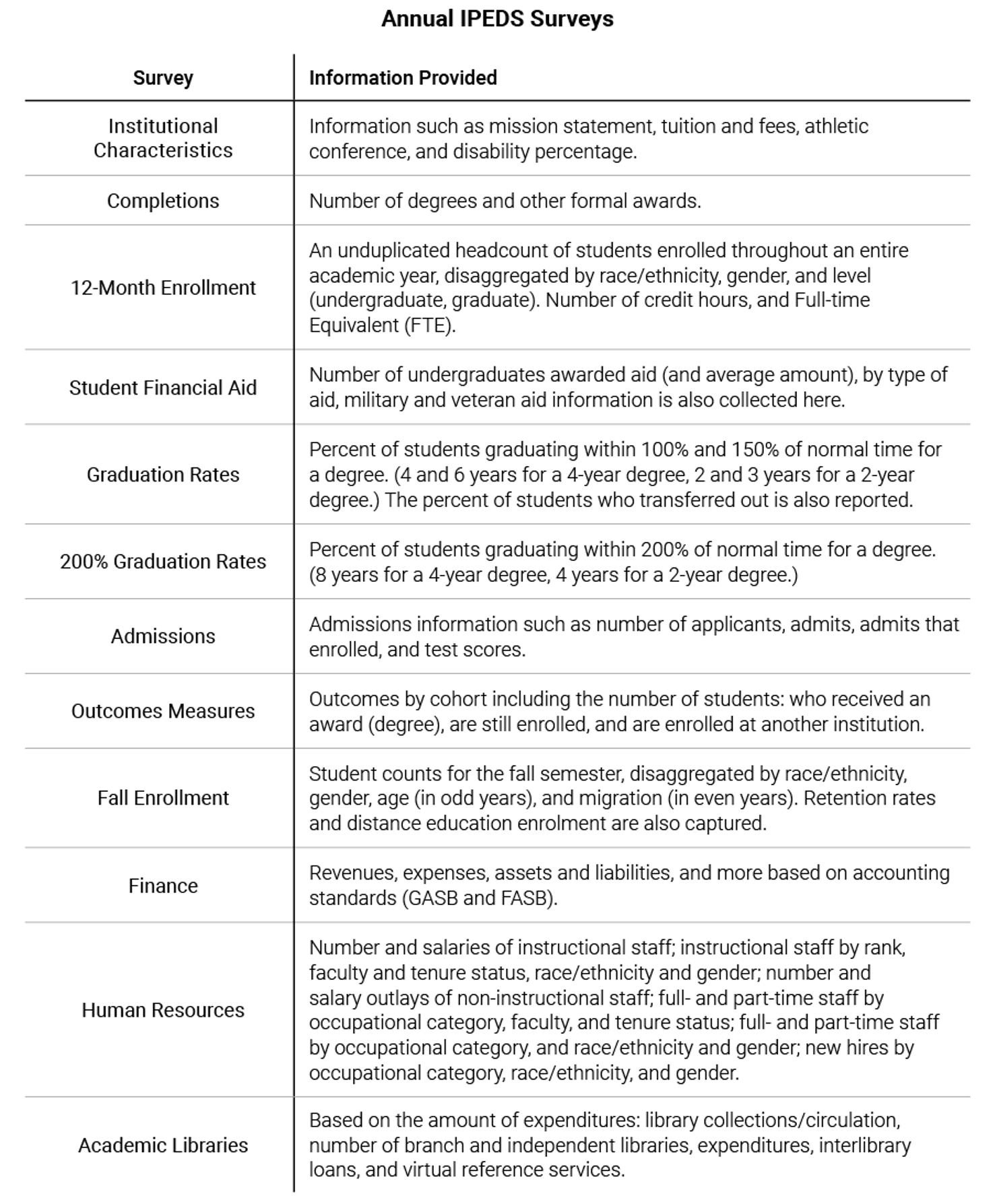

The reporting process requires institutions to complete multiple surveys in IPEDS.

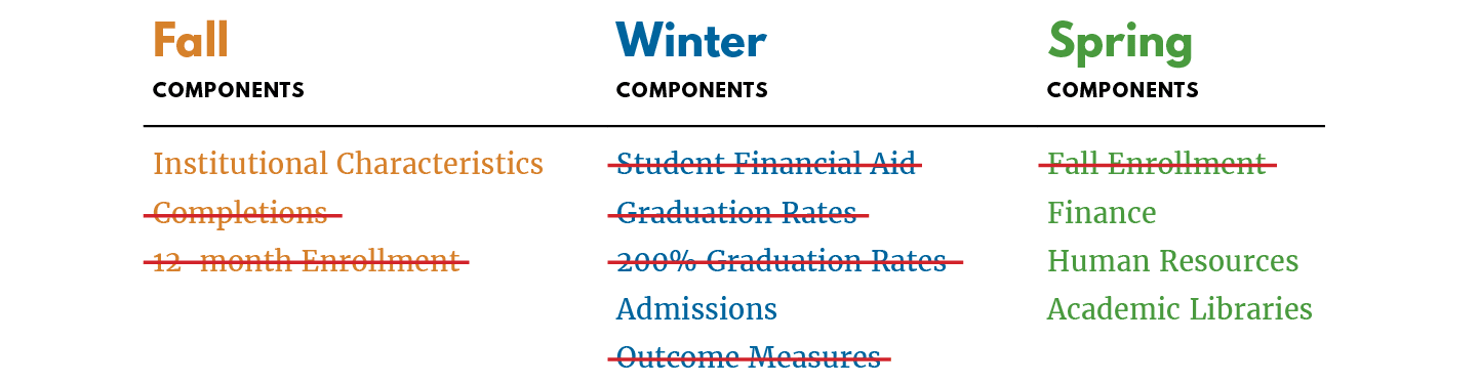

As demand for information on college outcomes continues to grow, institutions are reporting more data now than ever before. Each year, institutions must submit 12 different reports—known as surveys—to the Department of Education (the Department) providing data through IPEDS. Some of these measures also feed into the College Scorecard.3 These surveys require colleges and universities to report aggregate data on metrics like financial aid, graduation rates, and admissions and enrollment numbers, to name a few. According to a new report from the Institute for Higher Education Policy, most institutions are being asked to calculate upwards of 500 metrics in any given year, when disaggregated data are counted.4 This table outlines the comprehensive nature of IPEDS reporting requirements for institutions today.

While much of this information is necessary, aggregating all of these metrics can be highly unwieldy for institutions, especially smaller schools that have fewer resources and limited bandwidth.

The reporting process is duplicative across multiple actors and levels of government.

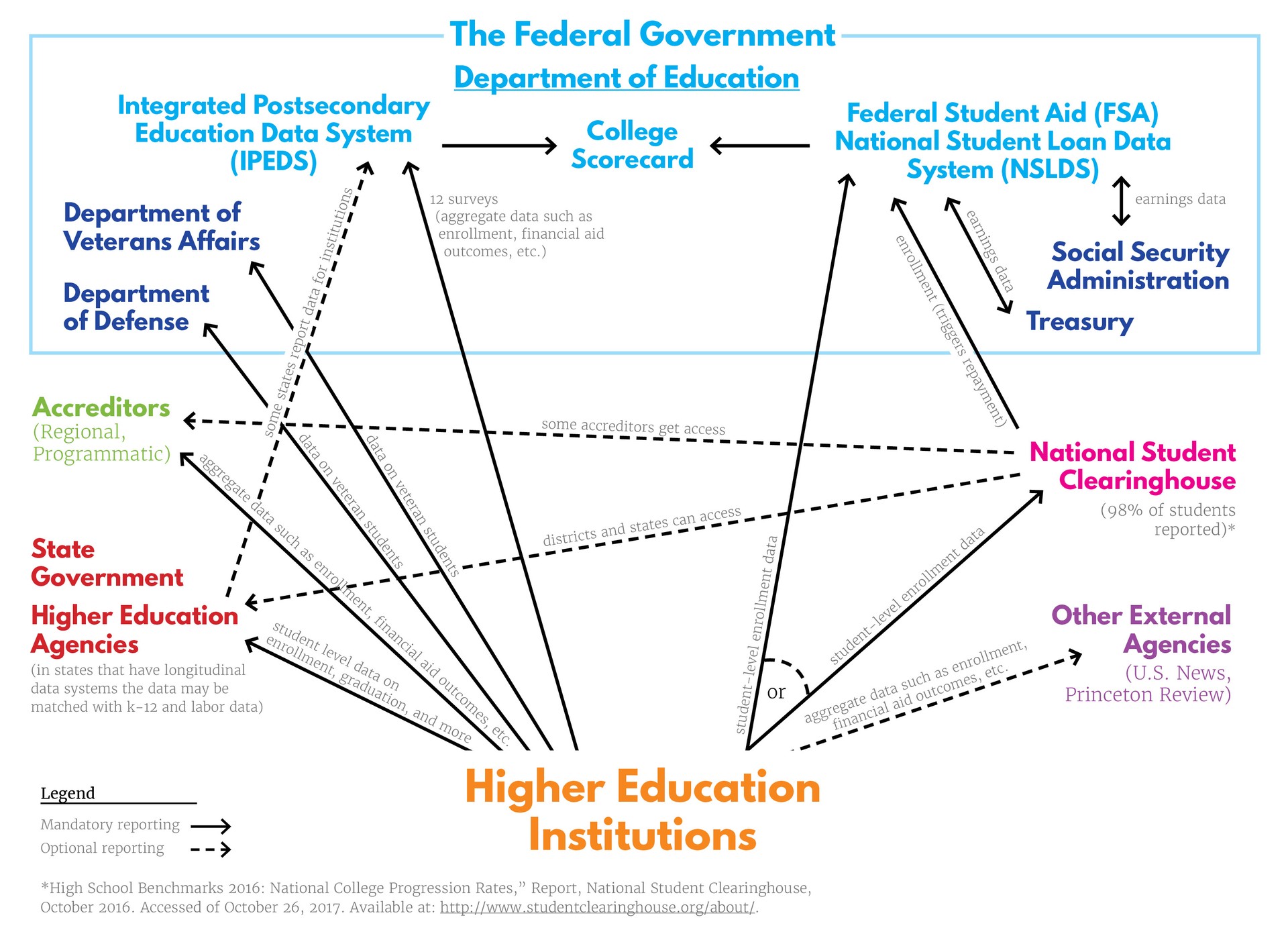

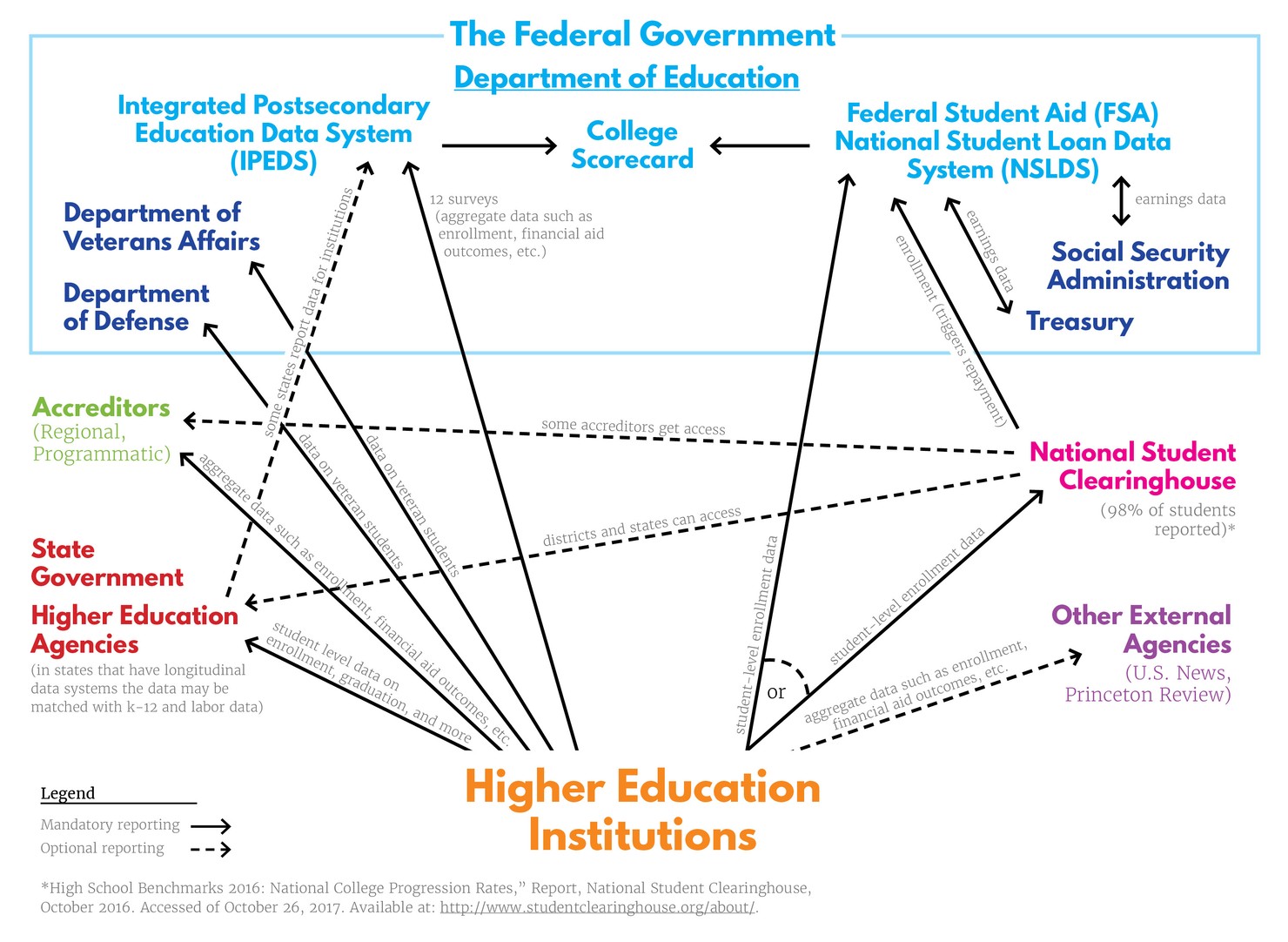

In addition to filling out multiple surveys in IPEDS, institutions may also be required to report to the federal government in other ways, to their state government, and to their accreditor, as well as to external entities such as U.S. News & World Report, Princeton Review, and the National Student Clearinghouse (NSC). Each of these entities requires institutions to report information in various ways if an institution chooses to use its services or be included in its rankings. One example of this duplicative process is how institutions report their monthly student-level enrollment data, including when a student leaves school either by graduating or by dropping out, to the NSC. The NSC acts as a “middle-man,” sending the data to the National Student Loan Data System (NSLDS), housed at the Department’s Office of Federal Student Aid, which uses this information to determine when students enter repayment based on their enrollment status. At the same time, institutions report institution-level enrollment data to IPEDS, another part of the Department. These various agencies at the federal level each have pieces of the data puzzle, but they simply can’t put them together, leaving institutions to spend time re-reporting information the government already has.

Just as institutions report student-level enrollment information to the NSC and aggregate-level data to the Department, they also report information on military and veteran students to the Departments of Defense and Veterans Affairs, both outside of the normal IPEDS reporting framework. Much of this data is also duplicated in the 47 states that have a state longitudinal data system that includes postsecondary information. These systems often also require institutions to report information such as enrollment, degrees, majors, and other demographic data; however, the way they do so may differ depending on what each state decides.5 This means institutions are continually aggregating, calculating, and duplicating instead of working through a streamlined process that would create more actionable data for all entities involved.

Reporting is costly and time-consuming.

All of this reporting costs colleges and universities valuable time and money. According to Department estimates, institutions will spend nearly one million hours completing the 12 surveys in IPEDS from 2016 to 2019.6 And at a time when many are blaming the rising cost of tuition on administrative bloat, universities are having to hire staff for this increasingly burdensome work. Based on data from the College and University Professional Association for Human Resources (CUPA-HR), it is estimated that four-year public universities may spend about $10,300 each year filling out just the 12 surveys in IPEDS.7 Not only does this cost institutions, it costs students, too. Money spent on duplicative reporting is money that could be put into academics or student support services that improve graduation and persistence rates, especially in schools that serve a greater share of low-income students.8 Under the current system, institutions are diverting critical resources to an ever-growing list of reporting needs instead of investing in tools that would actually help improve the outcomes of the students they serve.

How Higher Education Data is Inadequate

In addition to the data collection process being burdensome, it also fails to produce the most actionable information for institutions, taxpayers, and students alike. If the data collection process is going to require institutions to spend time and resources on reporting, it should ensure that all students are counted so that consumers and stakeholders have an accurate understanding of how well institutions are doing across the board.

Current reporting doesn’t give us the full picture.

The first limitation of the data we currently collect is that it fails to provide an accurate representation of how well all students are performing both within and across institutions. For one thing, because calculating employment and earnings data requires student-level data for matches, the Department must exclude the 30% of students who do not receive federal aid.9 This means that the post-attendance earnings data publicly available today only takes into account students who received grants or loans from the federal government to attend college. The earnings data also does not include program-level data, giving an average number for all students no matter their major. And, until this year, the Department only had graduation information on first-time, full-time students, a shrinking portion of the college-going population.10 Because of this limitation, last year federally reported completion rates only captured 47% of students graduating from postsecondary institutions.11 This year, those numbers include some data on transfer and part-time students, but that data is incomplete, making it difficult to fully understand how well these students are faring.

It should be noted that states have attempted to fill some of the holes in the public data by creating their own longitudinal data systems. However, having state-by-state systems that choose to include whatever metrics they want has left us with a patchwork of information that makes it challenging to understand the long-term outcomes of students on a national scale, especially in our more mobile economy. Take Kentucky, for example, which created a longitudinal data system that can see how many students transfer, graduate, or leave institutions in any given year. While this is helpful information, the state does not have data for students who attend for-profit institutions within the state or online programs based elsewhere (such as the University of Phoenix, which operates out of Arizona but has many students attending in Kentucky), or for students who transfer out of state. This makes it impossible to compare outcomes across the public, private non-profit, and for-profit spheres and leaves universities and the state in the dark if students find work across state lines in nearby cities like Cincinnati, Ohio, directly across the river from Kentucky.

Higher education data is hard to disaggregate across demographics.

When students are looking at potential colleges, they should be able to understand how likely students like them are to succeed at a given institution, especially since different types of students can sometimes have wildly different outcomes at the same school. However, much of the data we have now is limited when it comes to knowing how well subsets of students are performing within and across institutions. This is because the reporting systems provide most outcomes data in the aggregate, allowing specific types of students to get lost in the averages. So while we have full-time graduation rates and now part-time, transfer, and Pell graduation rates, we do not have the data that allow us to see much of this information across demographics like race, gender, or other characteristics. We can currently see six-year graduation rates by race/ethnicity or gender, but not by race/ethnicity and gender. And the data we currently have also cannot be broken down by transfer and Pell Grant status or part-time and Pell Grant status, or similar combinations. This makes it difficult for low- and moderate-income students to understand the opportunities a college or university may provide to students like them. It also leaves policymakers and taxpayers in the dark when it comes to knowing which institutions are helping to close equity gaps and which may be exacerbating them.
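To make the disaggregation problem concrete, here is a minimal sketch in Python. The records are entirely made up, the group labels ("A", "B", "F", "M") are placeholders, and the `graduation_rates` helper is hypothetical; nothing here reflects real IPEDS data or any actual reporting format. It only illustrates why two one-way tables cannot stand in for a combined breakdown, while student-level records can produce either.

```python
from collections import defaultdict

# Synthetic, made-up records for illustration only (not real data).
# Each tuple: (race_ethnicity, gender, graduated_within_six_years)
RECORDS = [
    ("A", "F", True), ("A", "F", True), ("A", "M", False), ("A", "M", False),
    ("B", "F", False), ("B", "F", False), ("B", "M", True), ("B", "M", True),
]

# Position of each grouping field inside a record tuple.
FIELDS = {"race": 0, "gender": 1}


def graduation_rates(records, *by):
    """Return {group: graduation rate}, grouping by the named fields."""
    totals = defaultdict(int)
    grads = defaultdict(int)
    for rec in records:
        group = tuple(rec[FIELDS[name]] for name in by)
        totals[group] += 1
        grads[group] += rec[2]  # True counts as 1, False as 0
    return {group: grads[group] / totals[group] for group in totals}


# One-way tables, similar in spirit to what aggregate reporting offers.
by_race = graduation_rates(RECORDS, "race")
by_gender = graduation_rates(RECORDS, "gender")

# Combined breakdown, only possible when records are kept per student.
by_both = graduation_rates(RECORDS, "race", "gender")

print(by_race)
print(by_gender)
print(by_both)
```

In this toy data, every one-way rate is 50%, yet the combined breakdown ranges from 0% to 100%. Real gaps are unlikely to be this stark, but the sketch shows how averages can hide them.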

Institutions serving non-traditional or transient students might not get the credit they deserve.

Our current system puts institutions that predominantly serve more transient student populations at a disadvantage. According to research from the National Student Clearinghouse, over one-third of students transferred at least once within six years and almost half of those students changed institutions again.12 Even with recent improvements in the data, many student outcomes are still left unknown because new part-time and transfer data released by the federal government earlier this fall doesn’t allow schools to follow the outcomes for their “transfer-out” population, nor is it a required metric. For example, an institution like Forsyth Technical Community College (FTCC) in Winston-Salem, NC, knows that 26% of its students transferred out, and that 28% of its first-time, part-time students were enrolled at a different institution within 8 years.13 However, neither the college nor prospective students have any idea how many of those students actually went on to graduate from another institution. This means that schools serving a predominantly transfer-heavy population, like community colleges, are not getting accurate credit for the good outcomes of students who go on to complete at four-year schools.
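As a rough illustration of what linked records would make possible, the sketch below computes a completion rate for a school's transfer-out students. Everything in it is an assumption: the student IDs, institution names, record layout, and the `transfer_out_completion` helper are hypothetical, and the logic is simplified (it ignores enrollment dates and order). It is not a description of how any federal or NSC system works.

```python
# Synthetic records for illustration only (not real data).
# Each tuple: (student_id, institution, completed_credential_there)
ENROLLMENTS = [
    ("s1", "Community College X", False),
    ("s1", "University Y", True),
    ("s2", "Community College X", False),
    ("s2", "University Z", False),
    ("s3", "Community College X", True),
    ("s4", "Community College X", False),
]


def transfer_out_completion(enrollments, origin):
    """Share of students who left `origin` without completing, enrolled
    elsewhere, and completed a credential at another institution.
    Returns None when the origin has no transfer-out students."""
    started = {sid for sid, inst, _ in enrollments if inst == origin}
    completed_at_origin = {
        sid for sid, inst, done in enrollments if inst == origin and done
    }
    # Map each transfer-out student to whether they completed anywhere else.
    elsewhere = {}
    for sid, inst, done in enrollments:
        if sid in started and sid not in completed_at_origin and inst != origin:
            elsewhere[sid] = elsewhere.get(sid, False) or done
    if not elsewhere:
        return None
    return sum(elsewhere.values()) / len(elsewhere)


rate = transfer_out_completion(ENROLLMENTS, "Community College X")
print(rate)
```

The calculation only works because the same student identifier appears in records from more than one institution, which is the link that institution-level aggregate reporting does not provide.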

It’s hard for schools to understand students’ economic outcomes and adjust.

Lastly, colleges and universities want to understand how well, or how poorly, they prepare their graduates to succeed in the 21st-century economy, but right now they lack the right information to do so. Because universities only have access to aggregate-level earnings data (one salary for all students 10 years out), institutions have a limited ability to understand how students in each of their programs are performing after they graduate. This makes it nearly impossible to elevate or improve the performance of individual programs, since graduates can have wildly different occupations and earnings based on their major. This lack of access to economic outcomes information by program makes it harder for institutions to correct course and properly adjust to increase the quality of their programs. And without comprehensive program-level data, students have no way to know how well students in a particular major (e.g., business) fare at one institution versus another.

How Can We Reduce Burden While Improving Quality?

Students, colleges, and taxpayers all want better information. Students need the best information possible when choosing a school that will be a good investment. Colleges and universities need data that paints a clear and accurate picture of how well their students are doing, so that the reporting burden they carry yields something of value in return. And taxpayers need to know that the $130 billion they send to institutions each year is a worthy investment that continues to increase economic mobility for many Americans.

One way to help ensure that adequate and useful data is captured is to overturn the 2008 ban on federal student-level data, which bipartisan legislation like the College Transparency Act proposes to do.14 For one, eliminating the ban on student-level data would allow institutions to send their data directly to the Department, greatly reducing the number of surveys they are required to complete and the amount of data they must aggregate on their own. Because the Department of Education would receive the data to fulfill the student components of IPEDS through the student-level collection, some researchers estimate that this change alone could reduce the number of surveys institutions are required to complete from 12 to five, freeing up a significant amount of time and resources for institutions to focus on other needs.15

A student-level data network would also connect the dots between the patchwork of systems and information that is available to consumers today. Students and taxpayers would no longer have to rely on geographically limited state data systems and instead could understand how well institutions prepare students for the economy no matter where they attended or where they work. Students would also be able to better understand their likelihood of succeeding at a given institution, and institutions could know how well all of their students perform at the program level so they can appropriately target their resources and adjust course.

Conclusion

Deciding where to pursue higher education is a huge decision. It plays a major role in the outcomes of a student’s life. Their postsecondary experience influences the type of career they will have, the money they may make, and the type of life they can ultimately live. Students making this decision should have the clearest data possible, but the current incomplete, disconnected, and duplicative data reporting has made this process more difficult than it needs to be and still leaves holes in our understanding of how well students are actually faring at institutions across the country. Both students and institutions stand to benefit from a system that reduces burden and provides more actionable data. And with bipartisan legislation in the works to help address these problems, achieving this goal is now within reach.