Report Published October 8, 2019

How Outcomes Metrics Can Better Reflect Community College Performance

In the US higher education system, community colleges are heavy lifters: while they make up only about a quarter of the nation’s postsecondary institutions, they serve nearly half of all undergraduate students. But when it comes to evaluating the performance of two-year institutions, traditional outcomes metrics can provide an incomplete picture, failing to account for the multiple missions of community colleges, the diversity of the students they serve, and the variety of educational pathways those students pursue. They often fail to fully measure the true value that community colleges provide—leaving students and taxpayers in the dark about which two-year schools are delivering on their promises and which ones are not.

That’s because on paper, the federal government makes virtually no distinction between two-year and four-year institutions in its required student outcomes reporting. Updates to the federal data system in recent years have injected greater nuance into the conversation around institutional and student-level performance, but there is still much work to be done to expand the availability of usable higher education data and refine the ways we think about and measure success across all schools and for all students.

While both transparency and accountability for student outcomes need to exist for all sectors of higher education, we can and should also recognize that not all institutions are the same. This means that some of the federally collected data points that make sense to report for four-year schools don’t offer as much insight in understanding community colleges, where students are more likely to be older, enrolled part-time, or balancing job and family responsibilities alongside their schoolwork. Far from negating the usefulness of reporting on community college student outcomes, these challenges actually raise the stakes for improving data collection at the federal level and transforming how that data is used.

For a large share of students, the community college in the district or county where they live is the most affordable and accessible option to continue their education—making it crucial that we have appropriate data and standards in place to hold every community college accountable for delivering on its commitments to students and the region it serves. If we really want to help students make informed college choices and experience the powerful economic mobility that higher education can provide, we need to think critically about what success looks like at community colleges and how to measure it in ways that promote institutional improvement.

Policymakers have an opportunity to engage with these questions in the impending reauthorization of the Higher Education Act (HEA) and future reforms to federal higher education data systems. This paper examines how current federal outcomes metrics related to degree completion; transfer performance; the employment, earnings, and debt of former students; and institutional equity gaps can be designed or enhanced to present a clearer picture of community college performance.

Community Colleges 101: The Multiple Missions of Two-Year Colleges

Just over a century after the founding of the first public two-year college in the US, there are now over 1,000 community colleges across the country, including 941 public and 75 private two-year institutions.1 Community colleges were designed to serve their geographic region by providing affordable and convenient entry points to higher education, and throughout their history they have held access, success, and social mobility at the heart of their shared mission. Unlike their four-year baccalaureate and doctoral counterparts that often devote significant resources to research, teaching is the central academic focus of most two-year institutions. Community colleges award associate degrees and industry-recognized certificates, with an emphasis on imparting knowledge, skills, and technical training to prepare students to transfer to four-year institutions or directly enter the local labor market. These varied offerings reflect both the wide range of educational, career, and personal outcomes that students seek from community colleges, and the many on- and off-ramps they may take along the way.

In addition to enrolling a significant percentage—41%—of all US undergraduates, community colleges disproportionately serve students of color, working adults, and other student populations that have conventionally been classified as “non-traditional” but today represent a growing and critical share of the nation’s college students. Among all undergraduates, 56% of Native Americans, 52% of Hispanics, 42% of blacks, and 39% of Asian and Pacific Islanders were enrolled in community colleges in fall 2017.2 Over 60% of full-time community college students also work part- or full-time, as do over 70% of those enrolled part-time.3 Twenty-nine percent are the first generation in their family to attend college, 20% are students with disabilities, and 5% are military veterans.4 And of the more than one in five US undergraduates who are student parents, 42% attend community colleges—the largest share enrolled in any higher education sector.5

Due to their commitment to open access and affordability, community colleges often also represent a gateway to economic opportunity for students from low- and middle-income backgrounds. Over a third of community college students (34%) receive federal aid based on demonstrated financial need like the Pell Grant and the Federal Supplemental Educational Opportunity Grant (FSEOG), with over 70% of dependent students who receive those grants coming from families with incomes of less than $30,000.6 Federal financial aid operates on a voucher system, with grant money following students to the institutions in which they enroll, and a large proportion of federal aid recipients choose to attend community colleges. Pell Grants represented over one-third of all federal aid received by two-year institutions in the 2017-2018 academic year and FSEOG represented another quarter.7

The social and labor market opportunities that community colleges provide to the diverse student populations they serve take many forms. For the more than 80% of entering students who express a desire to go on to pursue a bachelor’s degree, community colleges play a vital role in providing access to the general education courses, like college-level English and math, that they need in order to transfer to a four-year institution.8 But not all community college students follow a straight line to an associate degree or transfer pathway. Community colleges also work closely with local business and industry leaders to support the immediate training needs of the regional workforce. Working adults and GED recipients often turn to community colleges when they need upskilling or formal certification to continue or advance in their jobs, want to make a career shift into a new industry, or aspire to complete postsecondary credits started years earlier. A growing number of community colleges also offer dual enrollment programs in partnership with area high schools, and some four-year college students enrolled at other institutions pursue additional credits at community colleges during the summer months or over school breaks. While they may look very different, each of these student pathways into and through community colleges represents an element of mission fulfillment for institutions in the two-year sector.

How We Report Community College Outcomes Now

The need for access to better quality data has become a flashpoint in the national higher education conversation over the past decade. Institutions that receive federal funding are required to report data to the government each year, and that data is increasingly being made available to the public to help prospective students and their families make informed choices about where they will get the best return on their educational investment. In addition to promoting better transparency for consumers, having quality data that captures a larger proportion of students and more fully represents how well institutions are serving their constituents is essential to fostering improvement and innovation across the US higher education system.

The primary federal source of student outcomes data for all sectors of higher education is the Integrated Postsecondary Education Data System (IPEDS), which collects data annually from the more than 7,500 institutions that participate in federal financial aid programs. In addition to basic information like whether an institution is public or private and the types of programs it offers, IPEDS collects institution-level data on both inputs (for example, enrollment headcounts, tuition and fees charged, and average amounts of aid received by students) and outputs (including first-year retention and graduation rates). In 2017, the Department of Education (Department) introduced an update to IPEDS reporting known as the Outcomes Measures (OM) survey that addressed several longstanding points of IPEDS frustration for community colleges, including publishing outcomes for both part-time and non-first-time students at all two- and four-year institutions, expanding the student tracking period to eight years, and disaggregating data on Pell Grant recipients.9

While the introduction of the OM survey represented a step forward, the current federal ban on student-level data imposed through amendments to the HEA in 2008 has perpetuated information gaps, many of which disproportionately impact community colleges. To name a few: students classified as “full-time” by OM are enrolled full-time during their first term, but may later alter their enrollment intensity (switching from or between part- and full-time status); OM does not distinguish between degrees and other credentials like industry-recognized certificates; and OM excludes transfer students who completed a credential at a community college prior to transfer.10

To fill in some of the gaps left behind by IPEDS, other entities both internal and external to the federal government lead national data collection efforts to expand upon the available data. Among them are the College Scorecard, the National Student Clearinghouse (NSC), and the Voluntary Framework of Accountability (VFA)—each of which will factor into our analysis in the following sections of this paper. The College Scorecard is a consumer-facing website administered by the Department that builds on components from IPEDS and the National Student Loan Data System (NSLDS) to provide institution-level data on college costs, graduation rates, and student borrowing. This includes information on the median debt of a college’s borrowers, the share of borrowers who are in repayment, and the amount of their typical monthly payment. However, the College Scorecard limits its outcomes reporting to federal loan holders who completed college, and entirely omits data on non-borrowing students. That means it fails to account for the roughly 87% of community college students nationally who don’t take out loans to finance their education, severely limiting the tool’s usefulness for two-year schools.11

Outside the government, the NSC contracts with over 3,600 colleges and maintains a student-level database that tracks outcomes over a six-year period. This data can be especially useful for institutions with a lot of transfer activity because of the ability to track student movement (including across state lines) with greater precision. On the flip side, there are also significant limitations on the usability of NSC data, namely that without federal participation requirements, institutions have little incentive to share their data publicly, making it difficult to know how well they are delivering desired outcomes for their students.

Lastly, specific to the two-year sector, the VFA is an accountability framework designed for and by community colleges that tracks student outcomes across nine mutually exclusive measures related to completion, transfer, and enrollment behavior.12 Like the NSC and as its name implies, participation in the VFA is voluntary; in the 2018 data collection cycle, only 200 schools—or roughly one-fifth of community colleges nationwide—participated.13 While the resulting data is limited by this small percentage of participating colleges, the VFA offers insight into the ways that community college leaders and advocates believe that traditional outcomes metrics can be differentiated or enhanced to better serve their sector.

The challenges associated with collecting and analyzing meaningful information about community college outcomes and value propositions (and the variety of sources working to fill in the gaps) underscore broader calls for better quality data within the higher education community. However, these limitations cannot and should not prevent ongoing efforts to more fully understand the value community colleges provide, especially in light of the critical student populations they serve and the taxpayer support they receive. Efforts to strengthen data collection to expand conversations around student success, inform college choice, and promote greater accountability are currently underway at the federal level, most notably through proposed legislation like the bipartisan College Transparency Act (CTA), which would lift the ban on a student-level data system.14

Better Metrics, Better Picture: A Menu of Options to Effectively Measure Community College Outcomes

In the analysis that follows, we examine several of the most common student outcomes metrics, focusing on both the ways in which their typical design provides useful data points and how their current iterations may paint an incomplete picture when it comes to the two-year sector. We categorize these metrics into five groupings:

1. Completion Outcomes

2. Transfer Outcomes

3. Employment and Earnings Outcomes

4. Student Debt Outcomes

5. Equity Outcomes

Within each category, we discuss how multiple related metrics could fill in gaps in the current design to provide more nuanced data points for community college improvement and accountability. Recognizing the significant variation in the metrics collected by the federal government, state coordinating boards, external entities, and institutions themselves, we focus specifically on opportunities to maximize clarity and utility within the federal data system, and on how lawmakers can most effectively shape conversations around community college outcomes during the next reauthorization of the HEA or future reforms to federal data collection processes. Any of the individual options outlined here would represent a step forward in the way we currently report community college outcomes, and a single larger policy change like the passage of the CTA would represent a giant leap in the usability of federal data.

Given the diverse populations served by community colleges, it is essential that the data points for each metric proposed in this paper be disaggregated by a number of student characteristics. All, not just some, data must be disaggregated by race and ethnicity to allow for a meaningful understanding of how equitably community colleges serve students of different racial backgrounds. Since the 2017 IPEDS update, data are now disaggregated to reflect outcomes for Pell Grant recipients—an important reference point for community colleges—but further disaggregation by income quintile, rather than just Pell status, would introduce additional equity markers. Future data improvements could add value by enabling the disaggregation of data points by student age range to account for the growing proportion of non-traditional aged students in higher education, as well as by gender identity, program area or major, and credential-seeking status.

Most higher education data is currently limited to students who are classified as degree-seeking, which is an eligibility requirement for receiving federal student aid. We focus specifically on the degree-seeking population in this paper because it also encompasses the majority of community college students; however, separate policy discussions about how to better understand, represent, and hold institutions accountable for the outcomes of their non-degree-seeking students—and what data are needed to do so effectively—are also warranted. Each of these improvements to federal data collection would have the result of centering equity within the broader conversation on student outcomes and creating a clearer image of institutional performance.

1. Completion Outcomes

Enhanced Graduation Rate

Today, an institution’s graduation rate serves as one of the primary measures used to assess how well the school is serving its students, and for good reason: degree completion, not just degree pursuit, is tied to higher lifetime earnings and a bevy of other positive externalities for students, such as lower unemployment rates, higher levels of civic engagement, and a lower chance of defaulting on student loans. To measure an institution’s success at meeting this important benchmark, the statutorily-required federal graduation rate looks at degree completion within 150% of the “normal” time it takes to complete a degree—six years for bachelor’s degree-granting institutions and three for community colleges. According to federal data, the three-year graduation rate for the cohort of students that entered community college in 2014 was 33.9%, and since 1999, that figure has never surpassed 35%.15

In addition to the critique that this figure primarily accounts for first-time, full-time students (only a small sliver of the community college demographic), a common objection to the way we currently measure completion at community colleges is that three years is not a long enough timeframe. That’s why many community colleges have advocated for an additional graduation metric of 300% of time (or six years) for the two-year sector, which the VFA and NSC already track. On principle, this makes sense: we know that the pathway to a degree looks different for community college students, who are often enrolled part-time or working in addition to their studies and may take more time to complete. But neither VFA nor NSC data show completion outcomes at the six-year mark that differ significantly from what IPEDS reflects after three years. Data from the 200 institutions participating in the VFA for the cohort who entered a two-year institution in fall 2011 show a median six-year completion rate of just 23.3% for all students—even lower than the national figure—and 37.2% when limited to the credential-seeking cohort.16 And the NSC data from 3,600 institutions shows a six-year associate degree completion rate of 29% for the cohort of students who entered public community colleges in fall 2010.17 While there is some variation in the ways that IPEDS, VFA, and NSC data are collected and reported, these statistics illustrate that a secondary 300% of time graduation rate metric would not be a silver bullet to improve the optics around community college performance. More importantly, it would do nothing to account for the opportunity costs of students spending six years obtaining a degree that is designed to take only two.

Instead, we may be able to derive more actionable data on student success from looking at combined measures that account for a variety of mission-aligned positive outcomes for community colleges—such as a three-pronged “enhanced” graduation rate metric that sums the percentage of students in a given cohort that receive a credential, transfer to another institution without receiving a credential, or remain enrolled at the institution. While all three composite elements remain important, this approach can provide a more nuanced look at overall performance than any of them taken alone. The VFA collects such a statistic, and the data reflects that 52.7% of students who entered a reporting institution in 2011 fall into one of these three categories—graduation, transfer without credential, or persistence—compared to just 23.3% who graduated within the same time period.18 Similarly, NSC data indicates that 53.8% of students who entered a reporting two-year public institution in 2012 had either completed at their original institution, completed at a different two- or four-year institution, or were still enrolled at any institution after six years.19
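To make the arithmetic of the combined measure concrete, the short Python sketch below assigns each student in a cohort to exactly one outcome and sums the three positive categories. It is an illustration only: the record fields, the precedence order used to avoid double counting, and the ten-student cohort are our own assumptions and are not drawn from VFA or NSC definitions or data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class StudentRecord:
    # Hypothetical fields; real VFA or NSC records are structured differently.
    earned_credential: bool
    transferred_out: bool
    still_enrolled: bool


def classify(student: StudentRecord) -> str:
    """Assign exactly one outcome so that no student is counted twice.

    Assumed precedence: credential, then transfer, then continued enrollment.
    """
    if student.earned_credential:
        return "credential"
    if student.transferred_out:
        return "transfer_no_credential"
    if student.still_enrolled:
        return "still_enrolled"
    return "no_positive_outcome"


def enhanced_graduation_rate(cohort: list[StudentRecord]) -> dict[str, float]:
    """Return each positive outcome's share of the cohort (percent) and their sum."""
    if not cohort:
        raise ValueError("cohort must contain at least one student")
    counts = Counter(classify(s) for s in cohort)
    positive = ("credential", "transfer_no_credential", "still_enrolled")
    shares = {name: 100 * counts[name] / len(cohort) for name in positive}
    shares["enhanced_rate"] = sum(shares[name] for name in positive)
    return shares


# Invented cohort of 10 students, for illustration only.
sample_cohort = (
    [StudentRecord(True, False, False)] * 2      # earned a credential
    + [StudentRecord(False, True, False)] * 2    # transferred without one
    + [StudentRecord(False, False, True)] * 1    # still enrolled
    + [StudentRecord(False, False, False)] * 5   # none of the above
)
result = enhanced_graduation_rate(sample_cohort)
print(result)
```

In this invented cohort the graduation rate alone is 20%, while the combined rate is 50%. The gap comes from the transfer and persistence components, which is why reporting the three shares alongside the sum matters.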

Term-to-Term Persistence

A commonly used indicator of student progress is the first-year retention rate, which is a federal metric that tracks the percentage of students who return to the same institution in the fall following their first year of enrollment. As a standalone metric, the first-year retention rate is limited, and especially so for community colleges, because it includes only first-time, full-time, degree-seeking students. By failing to account for part-time enrollees, transfers, and second-year students, the traditional first-year retention rate is ill-equipped to provide adequate information on the more mobile population of community college students.

Moreover, the federal data system only tracks retention within the same institution—in other words, the federal government is fully capable of tracking a student who returns to the same institution at which they were enrolled during the previous academic year, but may not be able to track a student who spends one year at a community college but eventually ends up enrolling somewhere else. Comparatively, the persistence rate is a metric that, while often measured in terms of fall-to-fall enrollment like the retention rate, also includes students who continue their education at a different two- or four-year postsecondary institution than the one at which they first enrolled.20 Persistence rates will therefore typically be higher than retention rates: the NSC reports an overall (full- and part-time) persistence rate of 60% for students who started at a two-year public college in fall 2014, compared to a retention rate of 48.5%.21 While the federal government, through the Beginning Postsecondary Students Longitudinal Study (BPS), does record a persistence measure, BPS looks specifically at whether a student is still enrolled at any institution after six years and does not provide institution-level data, which limits its value.22

Related indicators that measure “term-to-term” persistence are especially well-suited for two-year institutions and the populations they serve. The term-to-term persistence rate measures the percentage of students who are retained at any institution in the following term (for example, from the fall to spring or spring to summer semesters), as opposed to the following academic year (which strictly looks at the rate of return from fall to fall). With a growing share of students enrolling in courses year-round, many institutions already choose to track term-to-term persistence rates for their degree-seeking students, often to supplement federal metrics by keeping tabs on student enrollment patterns throughout the full calendar year. From a policy perspective, tracking and making publicly available data on term-to-term persistence would offer consumers a more accurate snapshot of how well a community college is serving its students, especially since persistence rates have a role to play in illustrating return on investment: if a large percentage of students at an institution consistently fail to persist to the following term, low completion rates are sure to follow.
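The distinction between same-institution retention and any-institution persistence, measured from one term to the next, can be sketched in a few lines of Python. The data layout here (a student ID mapped to the institution attended in each term) is a hypothetical simplification of student-level enrollment records, and the institution names and students are invented.

```python
def retention_and_persistence(enrollments, start_term, next_term):
    """Compare same-institution retention with any-institution persistence.

    `enrollments` maps a student ID to a {term: institution} dict. This layout
    is a hypothetical simplification of student-level enrollment records.
    Returns (retention_percent, persistence_percent).
    """
    # The cohort is everyone enrolled in the starting term.
    cohort = {
        sid: terms[start_term]
        for sid, terms in enrollments.items()
        if start_term in terms
    }
    if not cohort:
        raise ValueError(f"no students enrolled in {start_term}")

    retained = 0   # enrolled next term at the same institution
    persisted = 0  # enrolled next term at any institution
    for sid, home_institution in cohort.items():
        next_institution = enrollments[sid].get(next_term)
        if next_institution is None:
            continue
        persisted += 1
        if next_institution == home_institution:
            retained += 1

    size = len(cohort)
    return 100 * retained / size, 100 * persisted / size


# Invented records: four students start at "CC-A" in the fall term.
sample = {
    "s1": {"fall": "CC-A", "spring": "CC-A"},
    "s2": {"fall": "CC-A", "spring": "CC-A"},
    "s3": {"fall": "CC-A", "spring": "State U"},
    "s4": {"fall": "CC-A"},
    "s5": {"spring": "CC-A"},  # not in the fall cohort, so ignored
}
retention, persistence = retention_and_persistence(sample, "fall", "spring")
print(f"retention {retention:.1f}%, persistence {persistence:.1f}%")
```

For these invented records the fall-to-spring retention rate is 50% and the persistence rate is 75%. The student who moved to another institution counts toward persistence but not retention, so persistence can never fall below retention for the same cohort.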

Institutions that collect this data often do so for their own improvement purposes. From an institutional standpoint, student-level tracking data can inform advising campaigns focused on persistence, or lead to examination of structural barriers that may be preventing students from returning for the next semester. In this way, mandating a federal term-to-term persistence metric could have the added bonus of encouraging institutions to look more closely at practices or policies—like unexpected registration fees, course scheduling issues, or credit loss during the transfer process—that are contributing to students stopping out, and take action to address them.

Leading Indicators

In addition to looking at completion, retention, and persistence rates, a growing body of research on community college success has homed in on specific enrollment and attainment behaviors during the first year of college that are correlated with higher graduation rates down the line. Two-year colleges in particular stand to benefit from metrics that can predict student outcomes early on, as tracking these “leading indicators” can allow for timelier assessment of institutional reform efforts.

Researchers from the Community College Research Center (CCRC) at Teachers College, Columbia University, point to three categories of first-year “momentum” metrics that can serve as powerful indicators of a student’s likelihood of graduating: credit momentum, gateway course momentum, and persistence momentum.23 In their research, credit momentum is broken down into the number of college credits a student accumulates in the first semester (6 or 12) and first year (15, 24, or 30) of study; gateway course momentum measures the rate at which students complete college-level math, English, or both during the first year; and persistence momentum looks at retention between the first and second term (as discussed in the previous section).24 In a study examining student progress in these areas at all community colleges in three states, CCRC simulated what longer-term completion outcomes would look like if more students reached specific milestones in year one. It found that while individual metrics are correlated with completion to varying degrees, higher attainment on any of these leading indicators is predicted to improve student outcomes overall.25
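As a rough sketch of how an institution might tabulate these first-year indicators from its own records, the Python example below computes the share of students meeting each milestone. We assume the credit figures act as "at least" thresholds; the field names, indicator labels, and sample records are hypothetical and do not reproduce CCRC's data or methodology.

```python
from dataclasses import dataclass


@dataclass
class FirstYearRecord:
    # Hypothetical fields describing one student's first year.
    first_term_credits: float
    first_year_credits: float
    passed_gateway_math: bool
    passed_gateway_english: bool
    enrolled_second_term: bool


# Each indicator is a yes/no check applied to one student record.
# Assumption: credit milestones are treated as "at least N credits".
INDICATORS = {
    "term1_credits_6_plus": lambda r: r.first_term_credits >= 6,
    "term1_credits_12_plus": lambda r: r.first_term_credits >= 12,
    "year1_credits_15_plus": lambda r: r.first_year_credits >= 15,
    "year1_credits_24_plus": lambda r: r.first_year_credits >= 24,
    "year1_credits_30_plus": lambda r: r.first_year_credits >= 30,
    "gateway_math": lambda r: r.passed_gateway_math,
    "gateway_english": lambda r: r.passed_gateway_english,
    "gateway_both": lambda r: r.passed_gateway_math and r.passed_gateway_english,
    "persisted_to_term2": lambda r: r.enrolled_second_term,
}


def momentum_shares(records):
    """Return the percent of students meeting each first-year indicator."""
    if not records:
        raise ValueError("records must contain at least one student")
    return {
        name: 100 * sum(1 for r in records if check(r)) / len(records)
        for name, check in INDICATORS.items()
    }


# Invented first-year records for four students.
sample_records = [
    FirstYearRecord(15, 30, True, True, True),
    FirstYearRecord(12, 24, True, False, True),
    FirstYearRecord(6, 9, False, True, True),
    FirstYearRecord(3, 3, False, False, False),
]
shares = momentum_shares(sample_records)
for name, percent in shares.items():
    print(f"{name}: {percent:.0f}%")
```

Because every indicator is available after one or two terms, a table like this can be refreshed each semester rather than waiting three or six years for a graduation rate.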

As with term-to-term persistence, variations on these early momentum metrics are gaining traction among institutions and states for their own data collection and strategic improvement initiatives, in part because they can be used to monitor the effectiveness of reforms over a shorter period of time. Within the federal context, leading indicators can be used to inform the national conversation about how and on what timeframe we measure institutional performance, or as early warning signs to accreditors about institutions that require additional support.

Credits to Degree

Affordability and access have always been hallmarks of a community college education—so when students end up earning more credits than they actually need for a two-year degree, it costs them unnecessary time and money and erodes these central commitments.

Credits to degree is a metric that reflects degree efficiency: if an associate degree requires 60 credits but the average number of credits students accumulate before earning a diploma exceeds that, students are ultimately paying for more courses than they needed. Complete College America (CCA), a policy and advocacy organization focused on college completion at the state and system level, was the first entity to develop a national data collection initiative to track completion-focused metrics. CCA reports that first-time, full- or part-time community college students accumulate an average of 80.2 credits before receiving an associate degree, for a total of 20.2 excess credits to degree.26 This can happen for a number of reasons—for example, poor advising, excess remedial or prerequisite coursework, change of major, taking unnecessary courses to maintain full-time standing for Pell eligibility, or credit loss through transfer can all contribute to inefficiencies in the degree pathway. And those extra credits are costly: based on a 15-credit semester and the average tuition and fees at public two-year institutions as reported by the College Board ($3,660), an excess 20 credits could cost a community college student an additional $2,464.27 That’s roughly two-thirds the cost of enrolling for a full extra, unnecessary year—not to mention the opportunity costs of lost earning potential associated with extended enrollment.
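The cost estimate above can be reproduced with a few lines of arithmetic, sketched here in Python. The calculation assumes that the $3,660 annual figure covers two 15-credit semesters (30 credits per year) and that tuition scales evenly per credit; both are simplifying assumptions on our part. Under them, the $2,464 figure corresponds to the unrounded 20.2 excess credits.

```python
# Figures quoted in the text above.
ANNUAL_TUITION_AND_FEES = 3660   # dollars, public two-year average (College Board)
CREDITS_PER_SEMESTER = 15
EXCESS_CREDITS = 20.2            # average excess credits to degree (CCA)

# Assumption: the annual figure covers two 15-credit semesters.
SEMESTERS_PER_YEAR = 2


def cost_per_credit(annual_cost, credits_per_semester, semesters_per_year):
    """Spread the annual sticker price evenly across a full-time credit load."""
    return annual_cost / (credits_per_semester * semesters_per_year)


per_credit = cost_per_credit(
    ANNUAL_TUITION_AND_FEES, CREDITS_PER_SEMESTER, SEMESTERS_PER_YEAR
)
excess_cost = EXCESS_CREDITS * per_credit
share_of_year = excess_cost / ANNUAL_TUITION_AND_FEES

print(f"cost per credit: ${per_credit:.0f}")
print(f"cost of excess credits: ${excess_cost:,.0f}")
print(f"share of a full year's tuition and fees: {share_of_year:.0%}")
```

The per-credit cost works out to $122, so 20.2 excess credits come to roughly $2,464, or about two-thirds of a full year of tuition and fees.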

The Department currently measures a “time to degree” estimate, which addresses the same core issues as the credits to degree metric but differs in a few key ways. First, the Department limits its reporting to first-time, full-time, degree-seeking students, excluding a large population of community college students. Measuring credits to degree also eliminates the need to reconcile differing definitions of the academic year, making it better suited to institutions that serve highly mobile students who, for example, enroll in summer or winter term courses with greater frequency. Importantly, because community college students may take longer to complete a degree, looking at credits rather than time to degree shifts the conversation away from a limiting focus on years of enrollment and toward a more comprehensive focus on the efficiency of the degree pathway. In this way, the credits to degree metric is better equipped to shine a light on the factors that contribute to inefficiencies and, in doing so, promote greater institutional accountability for helping students cross the finish line on time.

2. Transfer Outcomes

Transfer Rate

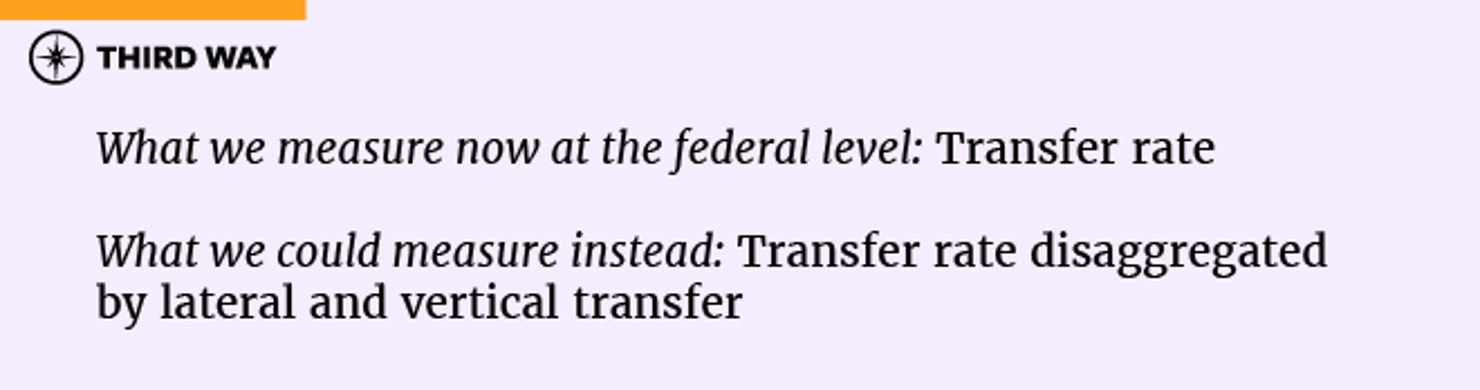

With the vast majority (about 80%) of entering community college students expressing an intent to pursue a bachelor’s or higher degree, preparing students for transfer is at the forefront of a community college’s mission.28 Successful transfer is predicated on a number of factors, among them strong advising systems and statewide articulation agreements between two- and four-year institutions that guarantee a student’s credits will be accepted upon transfer. To be clear, this burden does not fall solely on the shoulders of community colleges: the two- and four-year sectors must work together—and in partnership with the state—to establish clear pathways between institutions that efficiently lead to a degree. That being said, an institution’s transfer rate is a simple and useful metric for tracking the percentage of students who successfully transfer into another institution. Over one-third of all students transfer over the course of their postsecondary education, making this metric essential in any conversation about college outcomes.29 However, to strengthen the transfer rate metric for community college assessment, it is informative to disaggregate lateral transfers (from one two-year institution to another) and vertical transfers (from a two-year to a four-year institution), which the NSC already does. According to their data, the overall six-year transfer rate for students who entered a public two-year institution in fall 2008 was 39.6%, with 24.4% of students transferring vertically to a four-year institution and 15.2% transferring laterally to another two-year college.30 While the motivations for vertical transfer are largely self-explanatory, the motivations for lateral transfer are less clear. Transfer to another two-year institution could be due to a geographic move or a change in desired major or program, but it could also underscore broader institutional issues like subpar advising or inadequate access to required courses or remedial pathways.

Incorporating the direction of transfer in the federal transfer rate could help the government and accreditors identify institutions with high proportions of students transferring laterally, which could signal a pattern of unmet student needs.
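A direction-aware transfer rate is straightforward to compute once each student's first transfer destination is known. The Python sketch below splits an overall rate into vertical and lateral components and also reports the lateral share of all transfers, which is the kind of signal an accreditor might watch. The destination labels and sample cohort are hypothetical and do not correspond to an NSC or IPEDS field.

```python
from collections import Counter


def transfer_rates_by_direction(first_transfer_levels):
    """Split an overall transfer rate into vertical and lateral components.

    `first_transfer_levels` holds one entry per student who started at a
    two-year college: "four-year", "two-year", or None for no transfer.
    These labels are a hypothetical encoding, not an NSC or IPEDS field.
    """
    if not first_transfer_levels:
        raise ValueError("cohort must contain at least one student")
    counts = Counter(first_transfer_levels)
    size = len(first_transfer_levels)
    transfers = counts["four-year"] + counts["two-year"]

    rates = {
        "vertical": 100 * counts["four-year"] / size,
        "lateral": 100 * counts["two-year"] / size,
        "overall": 100 * transfers / size,
    }
    # Lateral transfers as a share of all transfers; undefined with no transfers.
    rates["lateral_share_of_transfers"] = (
        100 * counts["two-year"] / transfers if transfers else None
    )
    return rates


# Invented cohort of 10 students who started at a two-year college.
sample_levels = ["four-year"] * 2 + ["two-year"] * 1 + [None] * 7
rates = transfer_rates_by_direction(sample_levels)
print(rates)
```

In this invented cohort the overall transfer rate is 30%, made up of 20% vertical and 10% lateral transfers, so one in three transfers is lateral. Two institutions with the same overall rate could look very different on that last figure.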

Transfer Performance

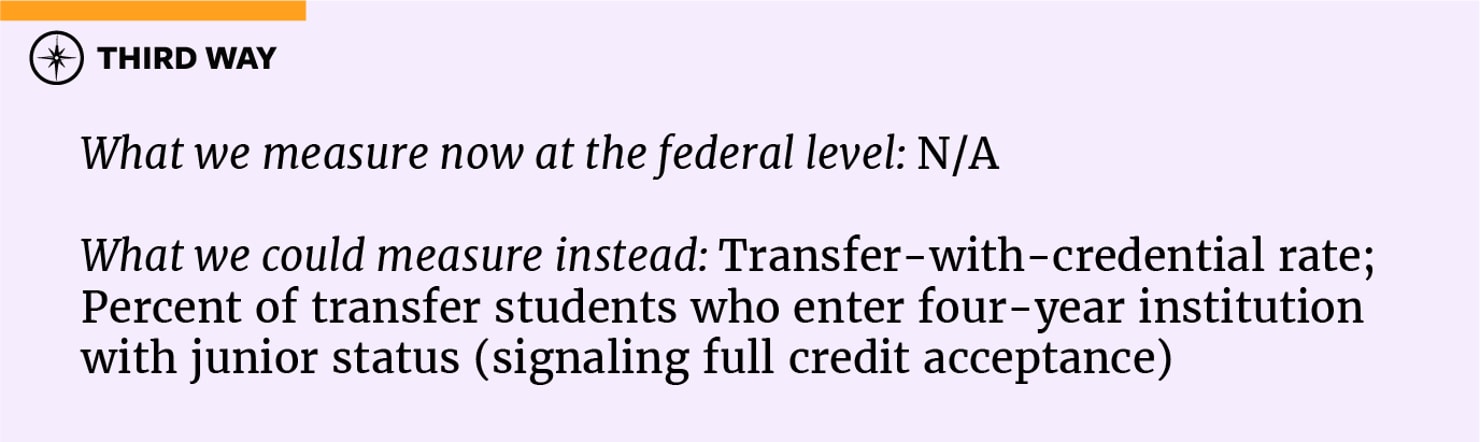

The transfer rate alone is insufficient to provide full context about how well community colleges are preparing transfers-out to succeed in their pursuit of a bachelor’s degree. Though not currently collected at the federal level, supplementary indicators of transfer performance can inject nuance into our understanding of successful transfer pathways. One such indicator is the percentage of transfer students who transfer with a credential. Researchers from CCRC have shown that students who earn an associate degree prior to transfer are 49% more likely to go on to earn a bachelor’s degree within four years than those who transfer with a comparable number of credits but no degree.31

Looking at the rate at which an institution’s transfer students typically depart with a credential in hand, then, can offer useful insight on the likelihood of transfer success. The NSC reports that over a third (36%) of students who started at a two-year institution transferred out before obtaining an associate degree, a troubling predictor of longer-term bachelor’s degree completion rates.32 Critically, 19% of community college transfers moved to an out-of-state institution, placing them beyond the collection range of state longitudinal tracking systems and making it harder to obtain reliable data on their degree progress.33 Federal collection of accurate transfer-with-credential rates would rely on the creation of a student-level data system, but stands to add significant value as a student-centered measure of success for the two-year sector.

Another transfer performance measure that could be tracked at the state level to complement student-level federal data is the rate at which an institution’s transfer-out students enter their new institution as a junior, a statistic that reflects whether the credits accumulated at the originating institution were successfully accepted by the receiving institution. A 2017 report by the Government Accountability Office (GAO) revealed that students lost an average of 43% of their earned credits during the transfer process, an unacceptable outcome that can contribute to lower completion rates and unnecessary costs for students.34 For example, the Campaign for College Opportunity found that transfer students from the California Community Colleges system (the largest in the country) paid $36,000-$38,000 more than they otherwise would have on their pathway to earning a bachelor’s degree.35 Poor transfer performance is also costly to institutions, with the same study noting that up to $41 million in California state funds and over 10,000 additional enrollment spots could be made available if the transfer process were easier and more efficient.36

Accordingly, looking at the percentage of a state’s public institutions that participate in articulation agreements can supplement federal data by providing insight on the complexity of transfer and the level of state and institutional commitment to maintaining strong transfer pathways. The Education Commission of the States reports that more than 30 states have instituted policies guaranteeing that students who receive an associate degree at a public two-year institution prior to transfer to a public four-year institution can transfer all earned lower-division course credits to the four-year institution, but the policy details matter a great deal in terms of student outcomes.37 Tracking the percentage of transfers-out who are able to enter a four-year institution as juniors can therefore serve not only as an indicator of successful and efficient transfer preparation at the two-year institution, but also as a predictor of subsequent bachelor’s degree attainment.

3. Employment and Earnings Outcomes

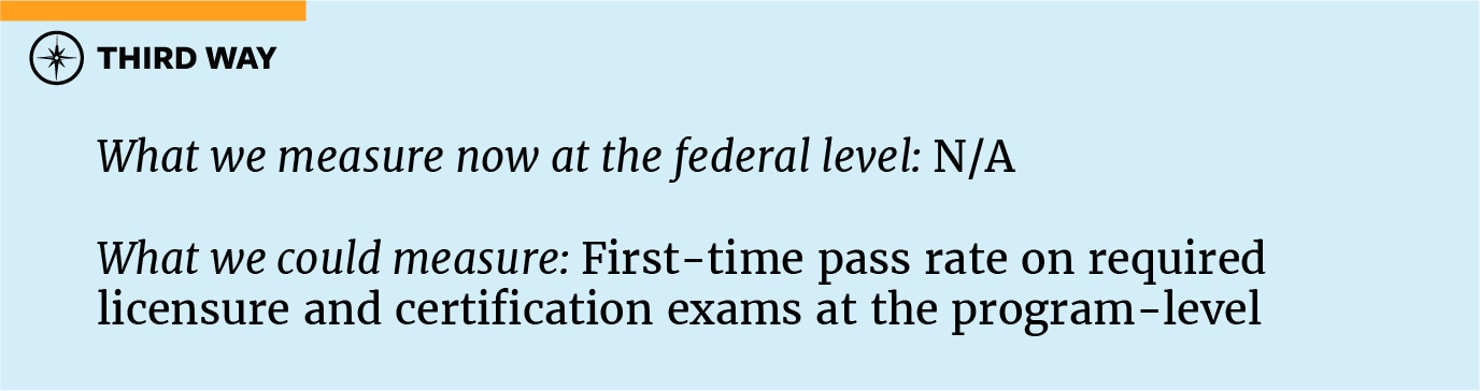

First-time Pass Rate for Industry Licensure or Certification Examinations

In addition to the traditional Associate of Arts (AA) and Associate of Science (AS) degrees, many community colleges offer workforce training programs designed with the specific goal of preparing students to pass a required licensure or certification examination. Often, these examinations allow the student to obtain an industry-recognized credential that can lead to an immediate earnings boost or promotion in their field. Tracking the pass rate for such examinations is therefore a logical and meaningful metric in monitoring the outcomes of workforce training programs at community colleges. Further focusing on first-time pass rates can prove illustrative: if students do not pass a licensure examination on the first try and need to invest additional time and resources into preparation, it raises questions about the quality of the program in which they enrolled and the value they received from their tuition dollars.

Many institutions with programs that fall under the purview of the federal Workforce Innovation and Opportunity Act (WIOA) are already required to conduct initial data collection on skill gains through these programs, including exam pass rates, and many states also independently collect this information. The North Carolina Community College System, for example, publishes an annual Performance Measures for Student Success Report that includes a Licensure and Certification Passing Rate Dashboard.38 The public, interactive data set allows users to track the year-over-year pass rate for first-time licensure and certification exam test-takers, either for the 58-college system as a whole or for individual institutions. The data covers program-level pass rates for 24 state-mandated exams, ranging from aviation to dental hygiene to nursing, which practitioners must pass in order to work in a specific field. By measuring success at the program-level rather than only for the institution overall, this approach allows for more targeted assessment of program quality—a conversation already happening at the federal level today. While both WIOA requirements and state-based reporting mechanisms are useful, they don’t negate the need to include similar provisions in the HEA: these data points directly reflect a student’s return on investment and should be tracked for all schools and programs receiving Title IV dollars.

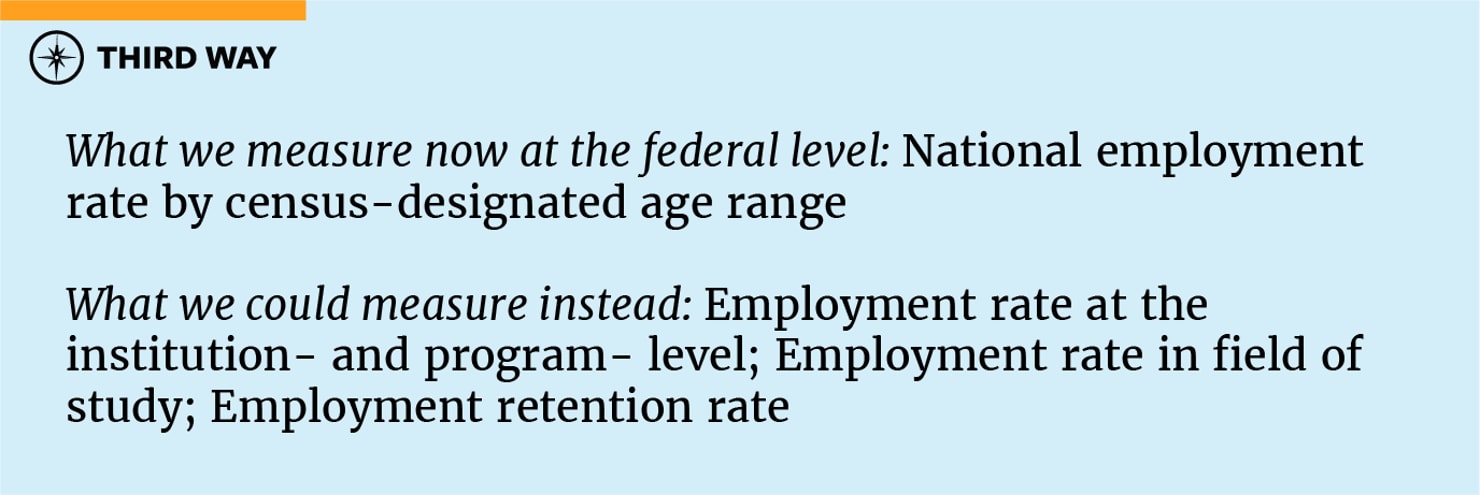

Employment Rate

The majority of students pursue postsecondary education with the goal of improving their employment prospects and finding a good job. Looking to the national data, we see that on average, individuals who earn a college degree are more likely to be employed and have higher earnings than those with no degree.39 But not all programs and schools are delivering the same returns for students. That’s why developing mechanisms to effectively track the employment rate of college graduates is important, if not always straightforward. Calculating institutional employment rates relies on matching data points from a combination of sources, often including federal data from IPEDS and the US Census, state unemployment insurance (UI) data records, NSC or other third-party sources, and institutional enrollment records or surveys of graduates.

While IPEDS publishes overall employment rates based on age and level of educational attainment, it is essential to have employment rate data available at the institutional level as well: it is a clear and easy-to-understand metric that offers baseline insight into the value associated with a student’s educational investment at a given institution. But there are also modifications and extensions of the standard employment rate that can add nuance to the conversation. One such adjustment is drilling in on the rate at which graduates find employment in their field. Tracking this metric often relies on student survey responses, which inherently injects some variability into the results, and the definition of what constitutes a “job in one’s field of study” can be inconsistent, difficult to define, or out of touch with the realities of the workforce. Even so, the metric remains useful for the two-year sector, where many programs tend to be career-oriented and focused on preparing students for highly specific types of work.

Looking at the employment rate of former students at different intervals following their departure from community college—for example, at 5 and 10 years out of school—is another way to add depth to employment data by highlighting job retention over a longer period of time. This type of periodic outcomes tracking isn’t unfamiliar to community colleges, as institutions that offer workforce development programs subject to WIOA guidelines already report on several indicators of program performance, including former students’ employment rates during the second and fourth quarters after their exit from the program.40 Since community colleges constitute the majority of institutions that offer programs under WIOA, adding this type of requirement at the federal level through other legislation would constitute an extension of existing institutional reporting efforts, rather than an introduction of new mandates.

Post-Enrollment Earnings

Like employment rates, measuring earnings relies on a combination of data sources, including the Department of the Treasury’s administrative tax records, which can be linked to data on federal student aid recipients to produce institution-level estimates of the average and median earnings of a school’s former students.

But looking at average or median earnings data alone, while simple, is less than illuminating; for one, it fails to connect earnings figures to a local or statewide average or median income benchmark. Making that connection is especially important for evaluating the two-year college sector, where students typically enroll in the community college closest to their home. A more useful earnings threshold metric used by the Department is the percentage of an institution’s former students making more than $28,000 (roughly the median income of 25- to 34-year-olds with only a high school diploma) six years after entering the institution. Right now, the median percentage of students from two-year colleges who earn more than the typical high school graduate is just 45%, with over 70% of community colleges leaving the majority of their students earning less than $28,000.41
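The threshold metric described above reduces to a simple share calculation. The sketch below is illustrative only: the function name `share_above_threshold` and the sample earnings are hypothetical, and the `threshold` parameter is exposed so that a local or statewide benchmark could be swapped in for the $28,000 national figure.

```python
def share_above_threshold(earnings, threshold=28_000):
    """Share of former students whose annual earnings exceed `threshold`.

    `earnings` is a list of annual earnings, one per former student.
    The default threshold follows the $28,000 figure cited in the text;
    a regional benchmark can be passed in instead.
    """
    if not earnings:
        raise ValueError("earnings list is empty")
    above = sum(1 for amount in earnings if amount > threshold)
    return above / len(earnings)


# Hypothetical earnings for five former students, six years after entry.
sample = [18_000, 25_000, 28_000, 31_000, 40_000]
national_share = share_above_threshold(sample)
# Same students measured against a hypothetical local benchmark.
local_share = share_above_threshold(sample, threshold=24_000)
```

Because the comparison is strict, a student earning exactly the threshold is not counted as above it; whether the Department treats the boundary that way is not stated in the text and is an assumption here.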

In a similar vein, a number of statewide community college systems track the proportion of an institution’s former students who attain a living wage for their district or county within one year of exiting the institution, an indicator of how well an institution’s credentials perform in the local labor market. The number one reason that students enroll in higher education is to be able to find a well-paying job; their chances of earning more than they could have without attending community college at all and of making enough to live comfortably in their regional area are two discrete ways to measure whether an institution is providing a real return on investment to students.

Metrics related to earnings growth can also shed light on the impact that additional education has had on an individual’s earnings. This can be done in a variety of ways. The California Community Colleges’ Career Technical Education survey, for example, tracks the median change in earnings from the second quarter before the academic year in which the student entered an institution to the second quarter after the academic year in which they exited their last institution of record, and WIOA reporting requirements include tracking the median earnings of program participants in the second quarter after leaving an institution. Earnings growth is an informative but imperfect measure: if a student enters college after a period of unemployment, any level of income will reflect a significant earnings gain. Because of this inherent vulnerability, earnings and employment metrics are most useful when viewed in conjunction with a variety of related metrics that can together point to trends in student outcomes.

4. Student Debt Outcomes

Cohort Default and Loan Repayment Rates

Whether an institution’s students are able to pay down the loan debt they took on in order to pay for their education is an important signal of how well that institution is delivering on its promises. Within the community college sector, a smaller proportion of students (13%) borrow than at four-year institutions, and those who do borrow tend to borrow smaller sums. For example, 51% of students who completed an associate degree and 33% of those who completed a certificate program in 2015-2016 did so with zero debt, and the largest proportion of borrowers took out less than $10,000 in loans.42

To be clear, borrowing for college is not a bad thing. College graduates experience strong wage premiums that extend over the course of their lifetimes, and some research indicates that community college students who borrow more actually fare better academically and are less likely to default.43 Accordingly, the lower rate and level of borrowing in the community college sector doesn’t diminish the importance of tracking student borrowing and loan repayment at the federal level, especially because we know that the students who are most at risk of defaulting on their loans are those who borrowed smaller amounts.44 For the cohort of public community college borrowers who entered repayment in fiscal year 2015, the three-year default rate was 16.7%, with a higher number of borrowers in default than any other institutional type.45 This underscores the significant repayment concerns plaguing the two-year sector for those who do borrow: five years after leaving a community college, the majority of borrowers at 68% of two-year institutions actually owed more on their student loans than they took out in the first place.46

The federal government has traditionally measured student debt using the cohort default rate (CDR), a metric that tracks the percentage of students who default on their federal student loans within three years of leaving the college. Institutional sanctions kick in (risking access to federal financial aid dollars) if 30% of a school’s students default for three years in a row or 40% default in a single year. The loan repayment rate is another metric that has factored into HEA reauthorization conversations, and while conceptually similar to CDR, repayment rates measure something slightly different: the percentage of a school’s borrowers that are able to start paying down the principal on their federal loans.47 The idea behind looking at repayment rates is that repayment is a less “gameable” metric—schools have figured out workarounds to help students avoid default for the window in which CDR is measured, like encouraging them to enter forbearance or income-based repayment plans. In reality, focusing on either metric alone can be misleading, and looking at both CDR and repayment rates presents a clearer picture of student success.
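The sanction rule described above can be expressed as a short check. This is a rough sketch based only on the thresholds as stated in the text; the function name `triggers_sanction` is hypothetical, and treating both thresholds as inclusive (a rate exactly at 30% or 40% counts) is an assumption, since the text does not specify how boundary values are handled.

```python
def triggers_sanction(cdr_by_year, multi_year_level=0.30, single_year_level=0.40):
    """Return True if a chronological list of annual CDRs meets either trigger.

    Triggers, as described in the text:
      - a rate at or above `multi_year_level` for three years in a row, or
      - a rate at or above `single_year_level` in any single year.
    Rates are fractions (0.30 means 30%). Inclusive boundaries are an assumption.
    """
    if any(rate >= single_year_level for rate in cdr_by_year):
        return True
    consecutive = 0
    for rate in cdr_by_year:
        # Extend the run of high-default years, or reset it.
        consecutive = consecutive + 1 if rate >= multi_year_level else 0
        if consecutive >= 3:
            return True
    return False


# Hypothetical institutions.
steady_high = triggers_sanction([0.31, 0.32, 0.30])        # three high years in a row
interrupted = triggers_sanction([0.31, 0.10, 0.35, 0.33])  # run broken in year two
one_spike = triggers_sanction([0.05, 0.41])                # single-year trigger
```

The interrupted case shows why keeping borrowers out of default for even one year within the measurement window can reset an institution's exposure, which is the "gameability" concern raised above.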

Community college advocates have long encouraged adjustments to CDR that would account for the sector’s lower rate of borrowing. Some current legislative proposals would address this concern by multiplying an institution’s CDR by the percentage of students at that school who borrow federal loans in the relevant fiscal year to create an “adjusted” cohort default rate. Applying the adjusted CDR only to institutions whose rate of student borrowing is below a certain threshold could add a further level of depth to this conversation and avoid lowering the overall bar (which could result in fewer bad actors being captured by CDR). These additional lenses on borrowing and debt could help better elucidate a student’s risk of defaulting on their loans after attending a particular two-year institution—valuable information for prospective students and their families, as well as for accreditors, who are supposed to be monitoring institutional performance.
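The adjusted rate described above is a single multiplication, optionally gated by a borrowing threshold. The sketch below is a hedged illustration: the function name `adjusted_cdr`, the `max_borrowing_rate` parameter, and the 10% cutoff in the example are hypothetical and do not come from any specific legislative proposal. The 16.7% and 13% inputs are the sector-level figures cited earlier in this section, used here purely as sample values.

```python
def adjusted_cdr(cdr, borrowing_rate, max_borrowing_rate=None):
    """Scale a cohort default rate by the share of students who borrow.

    `cdr` and `borrowing_rate` are fractions between 0 and 1.
    If `max_borrowing_rate` is given (a hypothetical policy cutoff), the
    adjustment applies only to institutions whose borrowing rate is below it;
    other institutions keep their unadjusted CDR.
    """
    if not (0 <= cdr <= 1 and 0 <= borrowing_rate <= 1):
        raise ValueError("rates must be fractions between 0 and 1")
    if max_borrowing_rate is not None and borrowing_rate >= max_borrowing_rate:
        return cdr
    return cdr * borrowing_rate


# Sample values: 16.7% default rate, 13% of students borrowing.
always_adjusted = adjusted_cdr(0.167, 0.13)
# With a hypothetical 10% cutoff, this institution borrows too much to qualify.
gated = adjusted_cdr(0.167, 0.13, max_borrowing_rate=0.10)
print(f"adjusted: {always_adjusted:.4f}, gated: {gated:.4f}")
```

The gated variant reflects the point made above: limiting the adjustment to low-borrowing institutions avoids lowering the bar for schools where most students take on debt.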

5. Equity Outcomes

Enrollment and Completion Gaps

As open access institutions deeply connected to the educational and labor market needs of the regions they serve, community colleges are well-positioned to be national leaders in increasing equity in student outcomes. Closing equity gaps in the higher education system demands targeted, long-term commitment and resource allocation, and responsibility for these efforts must be shared among institutions, states, and the federal government.

A critical baseline step is to ensure that all student success data points are disaggregated by race, ethnicity, and income quintile. Disaggregation on its own does not serve to address racial equity gaps or the structural conditions that contribute to them, but it does provide actionable information on how well institutions are serving their students of color and low-income students, and where reform efforts and additional funding should be directed. In the absence of federal leadership, many institutions and community college systems have started to enact policies and strategic plans aimed at identifying and closing these achievement gaps. Tracking institution-level outcomes metrics that advance equity goals within the federal data system would give this work the central position it warrants within the national conversation on access, equity, and success in higher education.

Two important equity outcomes metrics for community colleges are an institution’s enrollment equity—how well the participation rate of students of color and other underserved populations reflects the demographics of the surrounding community—and its completion gaps—the difference between the degree completion rate of students of color and that of their white peers. One state system that has prioritized equity in its data collection and reporting is the California Community Colleges system, which publishes an annual Student Success Scorecard that tracks statewide and institution-level student performance across its 115 public community colleges. As a condition to receive specific state funding streams, the California legislature also requires community college districts to maintain a three-year Student Equity Plan and conduct campus-based research on equity gaps, with data disaggregated to show disparate impact in access, retention, and completion for several student subpopulations.48
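As a rough illustration of the two metrics defined above, the sketch below computes completion gaps in percentage points and an enrollment equity ratio. The function names, the input layout, and all of the figures are hypothetical; in particular, expressing enrollment equity as a ratio of campus share to community share is one possible formulation, not the method used by the California system or any federal collection.

```python
def completion_gaps(records, reference_group):
    """Percentage-point gap between the reference group's completion rate
    and each other group's rate.

    `records` maps group name -> (completers, cohort_size).
    A positive gap means the group completes at a lower rate than the reference.
    """
    rates = {group: done / size for group, (done, size) in records.items()}
    reference_rate = rates[reference_group]
    return {
        group: round((reference_rate - rate) * 100, 1)
        for group, rate in rates.items()
        if group != reference_group
    }


def enrollment_equity(enrolled_share, community_share):
    """Ratio of a group's share of enrollment to its share of the surrounding
    community; values below 1 suggest under-representation on campus."""
    if community_share <= 0:
        raise ValueError("community_share must be positive")
    return enrolled_share / community_share


# Hypothetical institution with three student groups.
records = {"group_a": (120, 400), "group_b": (90, 400), "reference": (160, 400)}
gaps = completion_gaps(records, reference_group="reference")
ratio = enrollment_equity(enrolled_share=0.18, community_share=0.24)
```

Reporting gaps in percentage points rather than as raw rates makes it easier to compare institutions of different sizes and to track whether a gap is narrowing over time.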

While targeted institutional and state-level planning is absolutely necessary to make progress on closing gaps in college access and success, the federal government also has a major role to play in recalibrating its data system to increase transparency and ensure that federally-funded institutions are serving students equitably. In addition to disaggregating data and monitoring percentage point gaps in achievement by race and ethnicity, the federal data system should be equipped to track institution-level student outcomes by age range, gender identity, first-generation and veteran status, enrollment intensity, and income quintile. Creating a student-level data system and incorporating federal outcomes metrics focused on equity is an essential step forward, and a particularly vital step for the two-year sector, which serves a significant and diverse population of students and their communities.

Conclusion

Community colleges play a distinct and critical role within US higher education. The two-year sector offers accessible, affordable postsecondary pathways for millions of students, but federal data collection systems often fall short of presenting a clear picture of community college performance—leaving students and taxpayers with insufficient information on whether institutions are delivering a return on their investment.

Having a fuller picture of outcomes data is necessary for promoting transparency, improvement, and accountability across the higher education system. This is acutely important for the largely open-access two-year sector, where students often experience less choice. For prospective students, the community college located in their district or county is typically the single most convenient and most affordable option, so it is imperative that every community college be held to the highest standard for serving students in its jurisdiction well and preparing them for a successful future. And as many states look to expand tuition-free options at their public two-year schools, a growing share of students may select community colleges, making it even more urgent to ensure that they hold up their end of the bargain.

With the next reauthorization of the Higher Education Act on the horizon, federal policymakers have an opportunity to reexamine what success means for community colleges and how federal outcomes reporting can provide more meaningful data for both two- and four-year institutions. Looking to multiple, disaggregated measures of student success is essential, and the establishment of a federal student-level data system as proposed in the College Transparency Act would significantly enhance our understanding of institutional performance. Not only will such efforts help better align federal outcomes metrics with key distinctions between two- and four-year schools, but they will also unlock insights to promote institutional improvement and ensure that community colleges are providing real value for students and taxpayers.